By Russell Goldman, Founder of Buildable Engine · May 2026

Scott Galloway recently argued, convincingly, that the AI job apocalypse is a marketing strategy rather than an economic forecast. Writing in his newsletter No Mercy / No Malice, he put it plainly: "The AI job apocalypse isn't data-driven — it's narrative-driven, engineered by people who profit when you're scared. Fear is the product. Capital is the outcome."¹

He is right about the narrative. But there is a layer underneath it that his analysis doesn't reach. It is particularly visible from inside architecture, engineering, and construction, and it has consequences for how capital gets allocated to the technology companies that are supposed to fix these industries.

The problem is not just that the narrative is overblown. It is that the narrative is wrong in a specific way that is doing specific damage: to talent markets, to capital markets, and to the category of AI that actually belongs in these industries.

On a recent episode of Scaffold, the Architecture Foundation's podcast featuring architects from Herzog & de Meuron, Foster + Partners, and Zaha Hadid Architects discussing how AI is entering their studios, Shajay Bhooshan of ZHA made an observation worth sitting with. The concern in architecture right now is not that AI will replace designers. It is that the hype around AI is pulling the best talent out of architectural practice and into technology. Not because the profession has been automated, but because the narrative has made it feel like it's about to be. Firms are losing their most technically sophisticated people to AI companies, to software startups, to the hyperscalers building the models everyone is afraid of.²

The data on this is striking. More than half of AIA firms have already experimented with AI, yet only 6% have integrated it into active workflows.³ The gap between experimentation and deployment isn't a technology problem. It is a talent and judgment problem. The people who would know how to evaluate, select, and deploy the right systems are increasingly the people who left.

The result is a version of displacement that nobody planned for. Firms that lose their top technical talent don't find themselves leaning on AI because it's ready. They find themselves leaning on AI reluctantly, as a substitute for people who left. People who were drawn toward tech not by the reality of what AI can do in architectural practice, but by the story being told about it.

This is what an unchecked narrative actually costs. Not the jobs it predicts will disappear. The jobs it causes to disappear prematurely, through the self-fulfilling weight of the story itself.

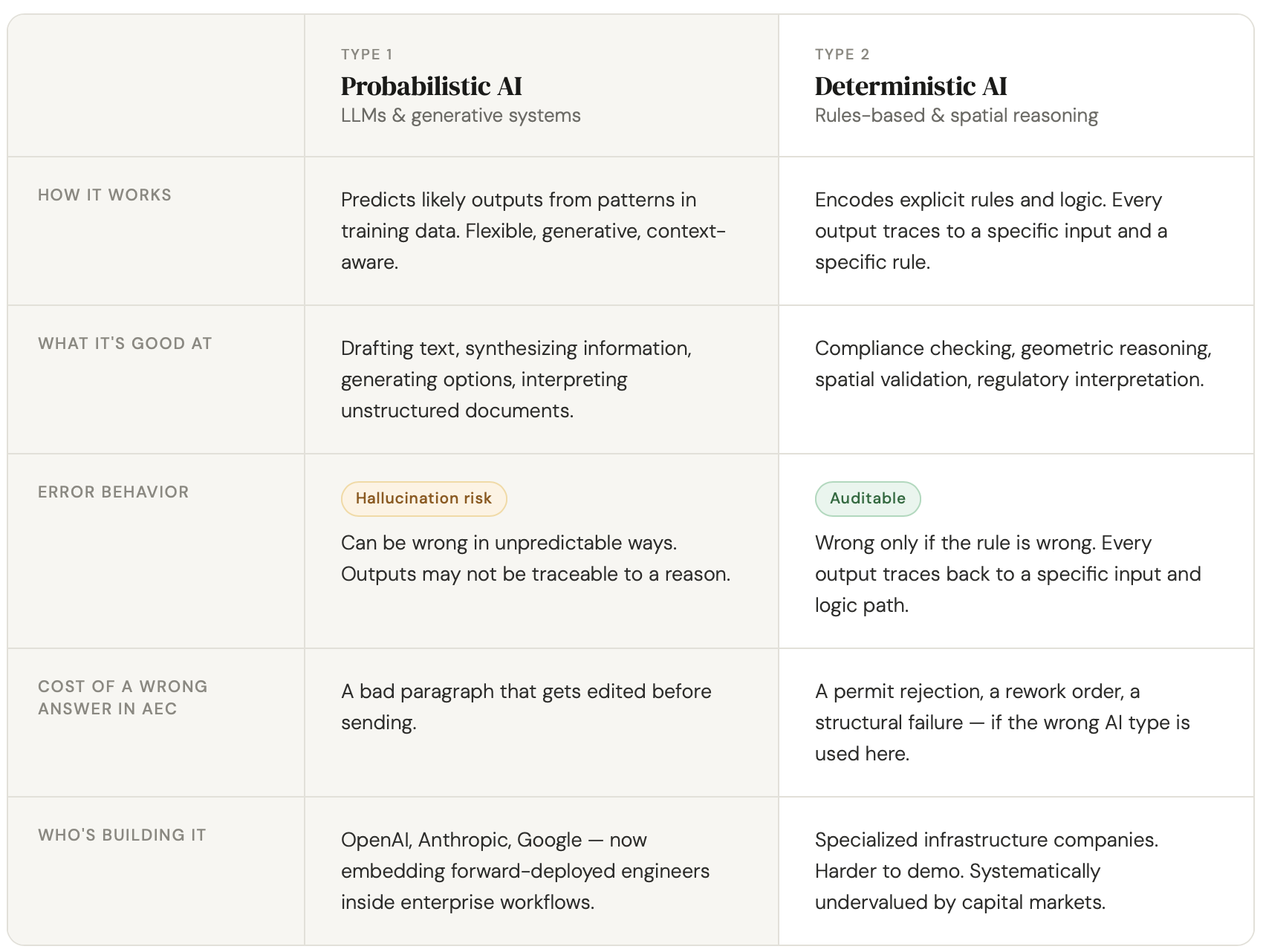

The deeper problem is that the entire public conversation treats AI as a single thing. There are two fundamentally different classes of AI system, and they are appropriate for completely different tasks.

Probabilistic AI — large language models, generative systems, the technology that powers ChatGPT and its descendants — works by predicting likely outputs based on patterns in training data. It is genuinely powerful for tasks where flexibility and pattern recognition matter: drafting text, synthesizing information, generating options, interpreting unstructured documents. It is also wrong some percentage of the time, in ways that are not always predictable, and its outputs cannot always be traced to a specific reason.

Deterministic AI works differently. It encodes rules and logic explicitly. Every output traces to a specific input and a specific rule. It does not hallucinate. It does not vary based on model behavior. In domains where the cost of a wrong answer is a permit rejection, a rework order, a failed inspection, or a structural failure, this distinction is not an abstract technical preference. It is the line between a system you can trust and one you cannot.
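Here is a minimal sketch, in Python and for illustration only, of what "every output traces to a specific input and a specific rule" can look like. The rule identifier `EGRESS-MIN-WIDTH`, the 44-inch default, and the `Finding` structure are hypothetical placeholders. They are not Buildable Engine's implementation and not the text of any building code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One compliance result carrying everything needed to audit it."""
    rule_id: str   # hypothetical identifier for the rule that produced it
    inputs: dict   # the exact values the rule was evaluated against
    passed: bool
    explanation: str


def check_min_width(element_id: str, width_in: float,
                    min_width_in: float = 44.0) -> Finding:
    """Deterministic check: the same arguments always yield the same Finding.

    The 44-inch default is an illustrative assumption, not a code citation.
    """
    passed = width_in >= min_width_in
    return Finding(
        rule_id="EGRESS-MIN-WIDTH",
        inputs={"element": element_id, "width_in": width_in,
                "min_width_in": min_width_in},
        passed=passed,
        explanation=(f"{element_id}: {width_in} in "
                     f"{'>=' if passed else '<'} required {min_width_in} in"),
    )
```

The check is a pure function of its inputs, so calling it twice on the same corridor returns identical findings. A failing result also explains itself: `check_min_width("Corridor 2-01", 42.0).explanation` is `"Corridor 2-01: 42.0 in < required 44.0 in"`. A rule can still be encoded incorrectly, but it cannot give a different answer from one run to the next.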

The current AI narrative collapses this distinction entirely. When Dario Amodei warns that AI could eliminate half of all entry-level white-collar jobs within five years — a projection he made to Axios last year that drove the "white-collar bloodbath" framing now embedded in every industry conversation about AI⁴ — he is talking about probabilistic AI and its descendants. When architecture firms worry about AI replacing designers, they are imagining generative systems. When lenders think about AI in underwriting, they picture language models reading loan applications.

None of those conversations leave room for the harder and more consequential question: for this specific task, with these specific stakes, which type of AI reasoning actually produces the outcome we need? The answer is rarely one or the other exclusively. LLMs belong in AEC workflows where synthesis, interpretation, and context matter. Deterministic systems belong where accuracy, traceability, and auditability are non-negotiable. The problem is not that LLMs are being used — it is that the decision about when to use them, and when not to, is being skipped entirely. Speed of output is not the same thing as quality of outcome.

Consider what the wrong AI looks like in practice. A general contractor using an LLM-based plan review tool runs a set of construction documents through the system. The tool returns a summary: confident, readable, formatted like a finding. What it cannot tell you is whether the egress path on Sheet A-201 actually clears the minimum required width when accounting for the door swing shown on Sheet A-401 and the equipment clearance specified in the mechanical drawings three hundred pages later. That determination requires geometric reasoning across the full document set, not pattern recognition across training data. The tool gave an answer. The answer was plausible. The field discovered the problem six weeks into construction. That is not an edge case. That is what happens when a system optimized for fluency is deployed in a workflow that requires accuracy, and when nobody in the room knew to ask whether a different architecture was warranted. According to the Construction Industry Institute, rework, including rework caused by coordination failures like this one, accounts for 5–10% of total project cost across the industry, translating to hundreds of billions in lost value annually.⁵
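At bottom, the egress scenario is arithmetic over dimensions that live on different sheets. The sketch below assumes those dimensions have already been extracted from the drawings. Extraction is the genuinely hard step and is not shown. Every name and number is hypothetical. The sketch also simplifies deliberately by treating all encroachments as occurring at the same point along the path. Real egress provisions, including specific allowances for door swings, are more detailed than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Encroachment:
    """Something that projects into an egress path (hypothetical model)."""
    source_sheet: str  # where the value was taken from, kept for traceability
    description: str
    depth_in: float    # projection into the path, in inches


def clear_width(nominal_width_in: float, encroachments: list[Encroachment]) -> float:
    """Nominal width minus all encroachments.

    Simplification: assumes every encroachment occurs at the same point
    along the path, so their depths add up.
    """
    return nominal_width_in - sum(e.depth_in for e in encroachments)


def egress_report(path_id: str, nominal_width_in: float,
                  encroachments: list[Encroachment],
                  required_in: float) -> tuple[bool, str]:
    """Return (passes, report), where the report lists each deduction and its source."""
    width = clear_width(nominal_width_in, encroachments)
    passes = width >= required_in
    lines = [f"{path_id}: nominal {nominal_width_in} in"]
    for e in encroachments:
        lines.append(f"  - {e.depth_in} in: {e.description} ({e.source_sheet})")
    lines.append(f"  = clear {width} in vs required {required_in} in -> "
                 f"{'PASS' if passes else 'FAIL'}")
    return passes, "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical values standing in for dimensions pulled from three sources.
    ok, report = egress_report(
        "Egress path on A-201",
        48.0,
        [
            Encroachment("A-401", "door leaf in open position", 7.0),
            Encroachment("mechanical drawings", "equipment clearance zone", 4.0),
        ],
        required_in=44.0,
    )
    print(report)
```

Run as a script, it prints a four-line report ending in `= clear 37.0 in vs required 44.0 in -> FAIL`, and it ties each deduction back to the sheet it came from. That source attribution lets a reviewer check the finding instead of simply trusting it.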

The conflation of all AI into a single narrative has a direct effect on where investment capital goes, and the effect is not neutral.

The built environment is now one of the hottest AI investment categories in the market. In Q2 2025, venture activity in construction technology hit $3.96 billion, a 75% increase over the same quarter the prior year, with nearly 70% of deals involving AI or machine learning-driven companies.⁶ In Q1 2025, AI-specific funding claimed 46% of all construction tech capital, up from 20–25% in prior years.⁷

Capital is flowing. The question is whether it is flowing to the right places.

Because large language models lowered the cost of building something that looks like an AI product, seed-stage venture is now flooded with companies that are essentially thin wrappers around foundation models. The demos are compelling. The growth metrics at early stages are real; distribution is cheap, and the market is primed to adopt anything that shows up wearing an AI badge. These companies raise at Series A valuations on seed traction, because the narrative has trained investors to move fast on anything that fits the pattern.

The companies getting systematically undervalued in this environment are the ones building harder technology: systems that don't just deploy AI, but make principled decisions about which type of AI to use for which task, and why. Consider a spatial reasoning engine that encodes building geometry into a queryable graph, applies deterministic compliance logic where accuracy is non-negotiable, and layers LLM capabilities where synthesis and interpretation are the point. It is not easy to explain in a pitch meeting, and it does not have the demo appeal of a chatbot that talks to your blueprints. The defensibility is real: patent-pending infrastructure, a rules library that takes years to build, outputs that are traceable and auditable, and an architecture that is constantly evaluated against what actually produces reliable outcomes for customers, including the legal and liability exposure those customers carry. None of that fits cleanly into the narrative frame investors have been trained on.

This is how hype cycles allocate capital badly. Not by funding bad ideas. By funding legible ideas at the expense of important ones.

The industries that actually need AI most — construction, architecture, engineering, commercial real estate — are not going to be transformed by any single class of AI system. They are going to be transformed by the disciplined combination of multiple types of AI reasoning, deployed in the right configuration for the right task.

That is a fundamentally different value proposition than what the current market narrative describes. The question is not which AI wins. It is whether the people building and deploying these systems understand the difference between a task that rewards speed and fluency and one that demands accuracy, repeatability, and traceability — and whether they are building infrastructure that can deliver both.

AEC is one of the largest industries in the world, valued at over $12 trillion globally,⁸ and one of the least digitized. McKinsey has identified construction as among the industries with the most to gain from technology adoption, yet among the slowest to capture that value.⁹ The productivity gap is not a mystery. It is the direct consequence of applying tools optimized for one kind of problem to a domain that contains many different kinds of problems, each with different requirements for what a correct answer looks like.

Consider what that looks like at the commercial real estate level. When a lender underwrites a large mixed-use development, the financial model is only as good as the assumptions about the physical asset beneath it. Today those assumptions are largely manual. Someone reviews plans, estimates unit mix, flags buildability risk, and guesses at completion timelines. That process is slow, expensive, and introduces enormous subjective variability into decisions that move hundreds of millions of dollars.

What changes when AI can reason directly over building geometry — extracting data from plans, modeling completion risk, identifying coordination conflicts before they become cost overruns, and connecting spatial intelligence directly to financial models — is not incremental. But the outputs that underwriters, lenders, and insurers can actually rely on are not the ones generated by systems optimized for fluency. They are the ones that can be traced, audited, and defended. The value is in the combination: LLM-powered interpretation where context and synthesis matter; deterministic verification where accuracy and accountability are the standard.

The same principle applies across the AEC lifecycle. Compliance checking. Buildability verification. Coordination conflict detection. Egress path analysis. These are not tasks where flexibility and pattern recognition alone are the point. They are tasks where accuracy, repeatability, and traceability are the point — and where the cost of a wrong answer is measured in project weeks, profit margin, and legal exposure.

The companies building that layer are not building against LLMs. They are building the judgment infrastructure that tells you when to use one.

The tragedy of the current moment is not that AI is being overhyped. It is that the hype is so loud, and so focused on one class of AI, that the signal from the other class cannot break through.

Firms in architecture and construction are making AI adoption decisions right now, under pressure from a narrative that tells them they need to move fast or get left behind. Many of them are deploying AI without understanding which type of reasoning a given workflow actually requires, or that the choice matters at all. Not because they evaluated the options and chose wrong, but because nobody framed it as a choice.

The marketing for every AI product in the AEC space sounds the same. The underlying architecture could not be more different.

The talent that left for tech took with it precisely the people who would have known to ask the right questions. The capital that flowed to legible AI startups did not flow to the infrastructure that these industries actually need. The investors who funded the wrappers did not fund the systems that know when to use an LLM and when not to.

This is what an industry looks like when the narrative outruns the reality: not mass unemployment, but misallocated talent, misallocated capital, and a generation of tools being deployed in the wrong places for the wrong reasons.

The stakes are now rising in a way that makes this more than a market inefficiency. On May 4, 2026, Anthropic and OpenAI each announced separate, billion-dollar joint ventures to embed their engineers directly inside the operations of enterprise clients. Anthropic partnered with Blackstone, Goldman Sachs, and Hellman & Friedman in a $1.5 billion vehicle. OpenAI closed a $10 billion venture with TPG, Brookfield, Bain Capital, and 16 other investors.¹⁰ Both structures follow what Palantir pioneered as the "forward-deployed engineer" model: technical teams physically inside client organizations, redesigning workflows around their employer's tools.

Those engineers are talented. But they build with the tools their companies make. Anthropic's engineers build with Claude. OpenAI's engineers build with GPT. The question of whether a deterministic system might be more appropriate for a given workflow, whether for underwriting, compliance, risk, or buildability, may never get asked. Not because anyone chose wrong, but because it was never in the frame. As Anthropic CFO Krishna Rao acknowledged in announcing the joint venture, "enterprise demand for Claude is significantly outpacing any single delivery model."¹¹ The delivery model is now the product. And what that model delivers is, by design, probabilistic.

This is how the misallocation stops being a cultural problem and becomes a structural one, written into contracts, embedded in workflows, and maintained by engineers whose incentives align with the tools they know.

Galloway is right that the job apocalypse won't happen the way it's being described. The labor market disruption from AI will be real but uneven, slower than predicted, and concentrated in the downturn rather than in the boom. Radiologist job listings in 2026 are up, not down. Coder job listings are up 11% year on year.¹² The narrative is running ahead of the data.

But the damage from the narrative is happening now, in the industries least equipped to absorb it. Architecture firms are losing technical talent to a fear that has outpaced the reality. Construction companies are buying AI software without understanding the difference between what they need and what they're being sold. Capital is flowing toward companies that tell the right story rather than companies building the right infrastructure. And now, the largest AI labs in the world are embedding their engineers directly into the industries that need a different kind of AI entirely.

The correction will come. It always does. The question is how much gets misallocated before the market figures out the difference between AI that generates outputs and AI that verifies them, and starts funding, hiring, and deploying accordingly.

When it does, the industry will need a name for the layer it was missing. That name is Buildability Intelligence™. It already exists.

Russell Goldman is the Founder of Buildable Engine, a spatial intelligence platform for the built environment, and a contributing author to The AI Edge (Amazon #1 Bestseller, 2026). Buildable Engine is featured in Forbes and quoted as an expert source in an upcoming Wired industry report.

He can be reached at russ@buildableengine.com

Sources
