By Russell Goldman, Founder of Buildable Engine · May 2026

A few months into 2026, Uber's chief technology officer discovered that his AI budget projections were obsolete before the year had properly started. The cause was Claude Code, Anthropic's AI coding assistant, whose consumption inside Uber's engineering teams had surged past every model the company had built. Engineers were paying $500 to $2,000 per month each in API costs, billed per token by a vendor at a rate that no internal spreadsheet had anticipated. "The budget I thought I would need is blown away already," Praveen Neppalli Naga told The Information.[1]

The story was reported as a cautionary tale about AI cost management. It deserves to be read as something more structural — and the construction industry, which is in the early stages of committing deeply to AI-enabled workflows, should pay close attention to what it actually reveals.

The reason Uber's AI costs outran its projections isn't that someone miscounted licenses. It's that purely probabilistic AI systems — large language models running agentic workflows — scale cost with usage in a way that is fundamentally different from traditional software. Traditional software has a largely fixed cost model: you pay for seats or access, and the cost is independent of how much thinking the software has to do. AI systems built around inference don't work that way. Every query consumes compute. Every token costs money. When usage surges, invoices surge with it, faster than anyone's spreadsheet anticipated.
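The contrast between the two cost models can be made concrete with a toy calculation. The sketch below is illustrative only: the seat price, per-token rate, and tokens-per-query figures are invented placeholders, not Uber's or any vendor's actual pricing.

```python
def seat_cost(seats: int, price_per_seat: float) -> float:
    """Traditional licensing: cost depends on seats, not on how much work is done."""
    return seats * price_per_seat


def inference_cost(queries: int, tokens_per_query: int, price_per_1k_tokens: float) -> float:
    """Usage-based billing: every query consumes tokens, and every token is billed."""
    return queries * tokens_per_query / 1000 * price_per_1k_tokens


# Hypothetical numbers chosen only to show the shape of each curve.
SEATS, SEAT_PRICE = 100, 50.0
TOKENS_PER_QUERY, PRICE_PER_1K = 20_000, 0.01

for monthly_queries in (10_000, 50_000, 250_000):
    fixed = seat_cost(SEATS, SEAT_PRICE)
    variable = inference_cost(monthly_queries, TOKENS_PER_QUERY, PRICE_PER_1K)
    print(f"{monthly_queries:>7} queries: seats ${fixed:,.0f}, inference ${variable:,.0f}")
```

With these placeholder inputs the seat line stays flat at $5,000 a month, while the inference line climbs from $2,000 to $50,000 as query volume grows 25-fold.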

This is not a pricing anomaly that smarter negotiation can solve. It is a structural property of the underlying architecture. Anthropic's recent shift to usage-based enterprise billing, which analysts estimate could double or triple costs for heavy users,[2] is simply the pricing model catching up to what the architecture always implied.

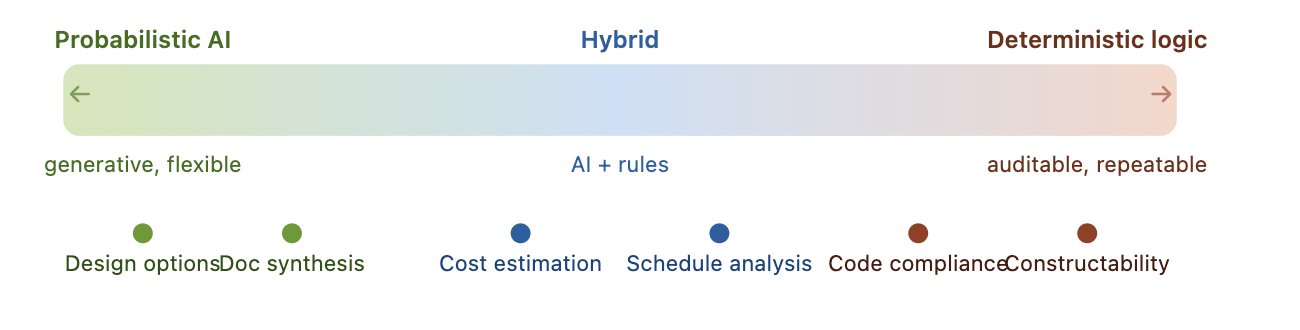

Figure 1: Cost scaling by AI architecture type. Axes: portfolio / project volume against software cost, with a budget ceiling marked. Series: pure probabilistic AI, hybrid model, deterministic system. Probabilistic systems (LLMs running every query) scale cost with usage and blow through budget ceilings. Hybrid and deterministic architectures stay predictable as volume grows.

For construction and AEC software, the implications are significant and almost entirely undiscussed.

The AEC industry is currently in the early stages of a major AI adoption cycle. Design generation, plan review, document analysis, cost estimation, project management — every major software category is being repositioned around AI capabilities. The pitch is consistent: more output, faster, with fewer people. The productivity gains at the pilot stage are real. Procurement teams are signing contracts.

But most enterprise AEC buyers are not yet asking the question that Uber's CTO is now asking from the other side of it: how does this cost model scale with my portfolio?

In a software company, usage scales with engineering activity. In a construction firm, usage scales with projects — and projects scale with the portfolio decisions that define the firm's entire growth strategy. A developer running ten projects today who grows to fifty projects over the next three years isn't just growing their AI usage by a factor of five. They are potentially growing it by a much larger factor, depending on how deeply AI is embedded in review, coordination, and decision-making workflows at the project level.
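A rough way to see why the factor can exceed five is to treat usage as a product of project count and depth of AI embedding. The sketch below assumes a simple multiplicative model; the workflow counts and queries per workflow are hypothetical inputs, not measured figures.

```python
def monthly_queries(projects: int, ai_workflows_per_project: int, queries_per_workflow: int) -> int:
    """Assumed model: usage is projects x AI-assisted workflows x queries per workflow."""
    return projects * ai_workflows_per_project * queries_per_workflow


# Hypothetical firm: ten projects today with AI in two workflows per project,
# growing to fifty projects with AI embedded in six workflows per project.
today = monthly_queries(projects=10, ai_workflows_per_project=2, queries_per_workflow=200)
later = monthly_queries(projects=50, ai_workflows_per_project=6, queries_per_workflow=200)

growth = later / today
print(f"projects grew 5x, AI usage grew {growth:.0f}x")
```

Under these assumptions a fivefold increase in projects becomes a fifteenfold increase in billed usage, because the depth of embedding tripled at the same time.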

The firms signing AI software contracts today are signing variable-cost agreements whose total cost at scale they have not yet modeled.

This is not a criticism of their procurement decisions. The tools are new, the usage patterns are still developing, and most vendors cannot yet provide reliable guidance on what consumption looks like at portfolio scale. But the cost structure is not theoretical. It is already present in the architecture.

Most AI-enabled plan review and compliance tools on the market today are built on LLM inference. That is rarely disclosed in marketing materials, and most buyers don't know to ask. It matters, for the cost reasons described above and the accuracy reasons discussed below, and it will matter more as portfolios scale. Before deploying AI deeply into construction workflows, firms should be asking three questions that rarely appear in vendor evaluations.

How does this cost model scale? If this system is running across every project in my portfolio — every plan review, every compliance check, every document analysis query — what does the invoice look like at two times my current volume? At five times? Is the cost model per query, per document, per project? Most vendors cannot answer this clearly because they have not modeled it themselves. That is worth knowing before a contract is signed.

Where in this system is AI actually doing the work? There is a significant architectural difference between a system where a large language model runs every query and a system where AI is used selectively for ingestion and interpretation while deterministic logic handles the high-frequency, high-stakes operations. The first architecture scales cost with usage. The second does not — or at least, it scales far more slowly and predictably. Understanding which architecture underlies a given product is not a technical detail. It is a financial one.
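The difference between the two architectures can be sketched by counting billed model calls. Everything here is an assumption made for illustration: `MeteredModel` is a stand-in that only counts calls, its return values are canned, and the thresholds are placeholders rather than values from any building code.

```python
class MeteredModel:
    """Stand-in for a billed LLM endpoint. It only counts calls; no real API is used."""

    def __init__(self) -> None:
        self.calls = 0

    def answer(self, document: str, question: str) -> bool:
        self.calls += 1  # each question about each document is a billed call
        return True      # canned reply; a real model would answer probabilistically

    def extract(self, document: str) -> dict[str, float]:
        self.calls += 1  # one billed call to turn the document into structured facts
        return {"door_width_in": 34.0, "corridor_width_in": 48.0}  # canned values


# Illustrative thresholds only; not quoted from any building code.
MINIMUMS = {"door_width_in": 32.0, "corridor_width_in": 44.0}


def review_pure_llm(model: MeteredModel, documents: list[str]) -> int:
    """Architecture 1: the model is asked every question for every document."""
    for doc in documents:
        for name in MINIMUMS:
            model.answer(doc, f"Does {name} meet the minimum?")
    return model.calls


def review_hybrid(model: MeteredModel, documents: list[str]) -> tuple[int, list[dict[str, bool]]]:
    """Architecture 2: the model extracts once per document; plain code runs the checks."""
    results = []
    for doc in documents:
        facts = model.extract(doc)
        results.append({name: facts[name] >= minimum for name, minimum in MINIMUMS.items()})
    return model.calls, results


sheets = ["sheet-A", "sheet-B", "sheet-C"]
pure_calls = review_pure_llm(MeteredModel(), sheets)
hybrid_calls, hybrid_results = review_hybrid(MeteredModel(), sheets)
print(f"pure LLM: {pure_calls} billed calls, hybrid: {hybrid_calls} billed calls")
```

In this toy setup, adding more checks raises the billed call count only in the first design. In the second, billed calls track the number of documents ingested, and each additional check is ordinary computation.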

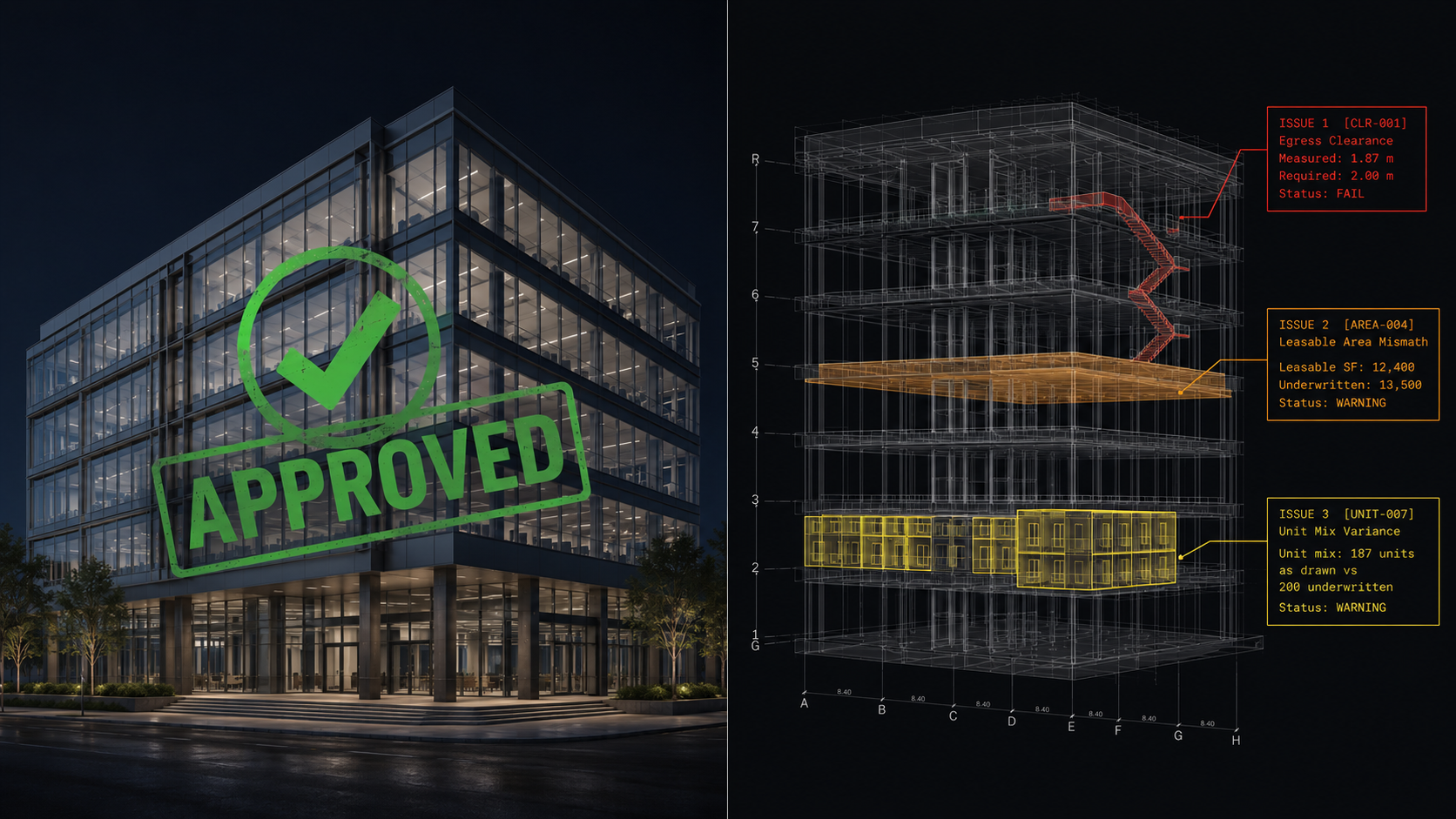

What happens when it's wrong? Every probabilistic system is wrong some percentage of the time. In enterprise software, an AI error creates a bad output that a human catches and corrects. In construction, an error in a compliance check, a constructability review, or a regulatory interpretation doesn't produce a bad document — it produces a permit rejection, a rework order, a project delay, or in the most serious cases, a safety issue. The cost of an error rate is not uniform across industries. In construction it is measured in project weeks and profit margin. Understanding how auditable a system's outputs are — whether a decision can be traced back to a specific rule and a specific piece of geometry — is not an abstract concern. It is a risk management question.
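What "traceable to a specific rule and a specific piece of geometry" might look like in practice can be sketched as a finding record. This is a minimal illustration, not a description of any particular product: the rule identifier, element identifiers, and threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    rule_id: str               # hypothetical identifier, not a real code citation
    description: str
    min_clear_width_in: float  # placeholder threshold


@dataclass(frozen=True)
class Door:
    element_id: str            # identifier of the geometry element being checked
    clear_width_in: float


@dataclass(frozen=True)
class Finding:
    rule_id: str
    element_id: str
    measured: float
    required: float
    passed: bool


def check_door(rule: Rule, door: Door) -> Finding:
    """Deterministic check: the same rule and geometry always yield the same finding."""
    return Finding(
        rule_id=rule.rule_id,
        element_id=door.element_id,
        measured=door.clear_width_in,
        required=rule.min_clear_width_in,
        passed=door.clear_width_in >= rule.min_clear_width_in,
    )


rule = Rule("EXAMPLE-DOOR-001", "Minimum clear door width (placeholder)", 32.0)
doors = [Door("door-101", 34.0), Door("door-102", 30.5)]
findings = [check_door(rule, door) for door in doors]

for f in findings:
    status = "PASS" if f.passed else "FAIL"
    print(f"{status} {f.element_id} against {f.rule_id}: {f.measured} in vs {f.required} in required")
```

Each finding names the rule and the element it was evaluated against, so a reviewer can audit a failure without re-running a model or guessing at its reasoning.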

These three questions are not abstract. They apply differently at each phase of the construction workflow — and the stakes of a wrong answer vary significantly depending on where you are in the project.

The pattern is consistent across both phases: wherever AI is being used to generate options or synthesize information, probabilistic approaches are acceptable — their flexibility is a feature. Wherever AI is being used to verify whether something is correct, safe, code-compliant, or constructable, probabilistic approaches introduce unacceptable risk. The output needs to be auditable, repeatable, and traceable to a specific rule.

This distinction is not theoretical. It determines both the cost trajectory of your AI software stack and the risk profile of the decisions it supports.

There is an irony in the way this problem is presenting itself to the AEC industry, because construction already has a mature framework for thinking about exactly this question. It is called structural engineering.

A structural engineer does not design a load-bearing column using statistical approximation. The column is calculated deterministically against known physics — loads, spans, material properties, safety factors. The creativity and judgment happen at the design level. The verification happens through rigorous, traceable calculation. This is not because structural engineers lack access to probabilistic methods. It is because in a domain where the cost of being wrong is catastrophic, auditability and reproducibility are not optional features. They are the foundation of professional practice.

The same principle should govern AI architecture in construction workflows. Use probabilistic AI where flexibility and pattern recognition create value — interpreting unstructured documents, generating design options, synthesizing information from heterogeneous sources. Use deterministic systems where accuracy, auditability, and cost predictability are non-negotiable — compliance verification, constructability checking, spatial validation, regulatory interpretation.

This hybrid architecture is not a compromise position. It is the appropriate response to the domain. It also happens to produce a cost profile that scales like traditional software rather than like an inference engine — which, as the Uber story illustrates, is a distinction that matters enormously at scale.

This is the architecture we built Buildable Engine around. We use AI where it creates genuine value — reading and interpreting unstructured documents, handling the parts of a workflow where flexibility and pattern recognition matter. Where AI touches interpretation that carries real stakes, we flag it explicitly so users always know when they are looking at a probabilistic output. Everything else — compliance checking, constructability verification, spatial validation — runs on deterministic logic against a structured rules database. The result is a system whose outputs are traceable to specific rules, whose costs scale predictably with portfolio volume, and whose accuracy on high-stakes checks doesn't vary with model behavior.

The cost dynamics of pure AI systems will lead some large AEC firms and developers to consider building their own solutions. The logic is straightforward: control your cost model, own your data, customize for your specific workflows. This instinct is understandable and in some narrow cases correct. The firms that evaluate it seriously tend to arrive at a clearer picture of what they actually need from a vendor — which makes them better buyers regardless of what they decide.

What serious evaluation usually reveals is that the hidden cost of a construction intelligence system is not the software. It is the rules library — the jurisdiction-specific, version-controlled, continuously updated representation of building codes, accessibility standards, fire and life safety provisions, and zoning regulations that the system needs to evaluate designs against.

Building codes change. Jurisdictions vary. New versions of the IBC, the IRC, and local amendments are released on regular cycles. Maintaining a comprehensive, current, multi-jurisdictional rules library is not a software development problem. It is an ongoing operational commitment that requires domain expertise, systematic monitoring of regulatory changes, and continuous validation against real projects. Most AEC firms are not in the software business. The firms that try to internalize this infrastructure often discover, after significant investment, that the maintenance burden is the actual product.
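To give a sense of why the rules library is an ongoing commitment, here is a minimal sketch of a jurisdiction-specific, versioned rule lookup. The jurisdictions, rule identifier, dates, and values are invented for illustration, and a production library would carry far more structure than this.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RuleVersion:
    jurisdiction: str
    rule_id: str
    effective: date   # date this version takes effect
    min_value: float  # placeholder requirement


# Hypothetical entries: the same rule differs by jurisdiction and changes over time.
LIBRARY = [
    RuleVersion("city-a", "EXAMPLE-STAIR-WIDTH", date(2021, 1, 1), 36.0),
    RuleVersion("city-a", "EXAMPLE-STAIR-WIDTH", date(2024, 1, 1), 44.0),
    RuleVersion("city-b", "EXAMPLE-STAIR-WIDTH", date(2022, 7, 1), 36.0),
]


def rule_in_force(jurisdiction: str, rule_id: str, on: date) -> RuleVersion:
    """Return the latest version of a rule that was effective on the given date."""
    candidates = [
        r for r in LIBRARY
        if r.jurisdiction == jurisdiction and r.rule_id == rule_id and r.effective <= on
    ]
    if not candidates:
        raise LookupError(f"no version of {rule_id} in force in {jurisdiction} on {on}")
    return max(candidates, key=lambda r: r.effective)


permit_2023 = rule_in_force("city-a", "EXAMPLE-STAIR-WIDTH", date(2023, 6, 1))
permit_2024 = rule_in_force("city-a", "EXAMPLE-STAIR-WIDTH", date(2024, 6, 1))
print(permit_2023.min_value, permit_2024.min_value)
```

The lookup itself is trivial. The expensive part is keeping entries like these complete and current across every jurisdiction and code cycle, which is the maintenance burden described above.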

The sophisticated question for most firms is not build versus buy. It is: do I understand the cost model of what I'm buying, do I know where the rules come from and how they're maintained, and is the underlying architecture designed in a way that will scale predictably as my portfolio grows? The firms asking those questions are the ones who end up with infrastructure that still makes sense three years from now.

The AI cost problem that surfaced at Uber in early 2026 is not a technology company problem. It is a structural property of how probabilistic AI systems are built and priced, and it will surface in every industry that deploys these systems at scale. Construction is earlier in the adoption curve than software engineering, which means the industry has a window to ask better questions before the invoices arrive.

The firms that navigate this well will not necessarily be the ones that adopt AI most aggressively. They will be the ones that understand the distinction between where probabilistic inference creates value and where deterministic, auditable, cost-predictable systems are required — and that build their AI workflows accordingly.

That distinction is not new to construction. The industry has always known that some decisions require judgment and some require calculation. The same logic applies to the software it is now deploying at scale: the best AI architectures for construction aren't the ones that do the most, but the ones that know the difference.

If you are a developer, GC, architect, or lender evaluating AI-enabled construction software — and you want a clear framework for pressure-testing the architecture and cost model of what you're being sold — we would welcome the conversation. That is exactly the kind of due diligence we think the market needs more of, and we are happy to be part of it whether or not Buildable Engine ends up being the right fit. If you are a technology investor who believes the underlying architecture of a construction AI platform will determine which ones survive at scale, we would welcome that conversation as well.

Reach us at support@buildableengine.com.

Sources
