An architect updates a wall assembly late in design development. The revised drawing is uploaded to the project folder. The structural consultant is still referencing the previous PDF set. The specification manual still reflects the older assembly. The contractor submits pricing based on outdated information. During permitting, the jurisdiction flags a fire-rating conflict between the drawing set and the submitted specifications. An RFI is issued. Coordination meetings follow. The schedule slips.

None of this is unusual. It is also, notably, not a technology failure. Every person in that sequence was doing their job. The drawings were updated. The consultant was reviewing documents. The contractor was pricing from what they had. The problem was not incompetence — it was that the information systems underlying the entire workflow were never designed to stay in sync.

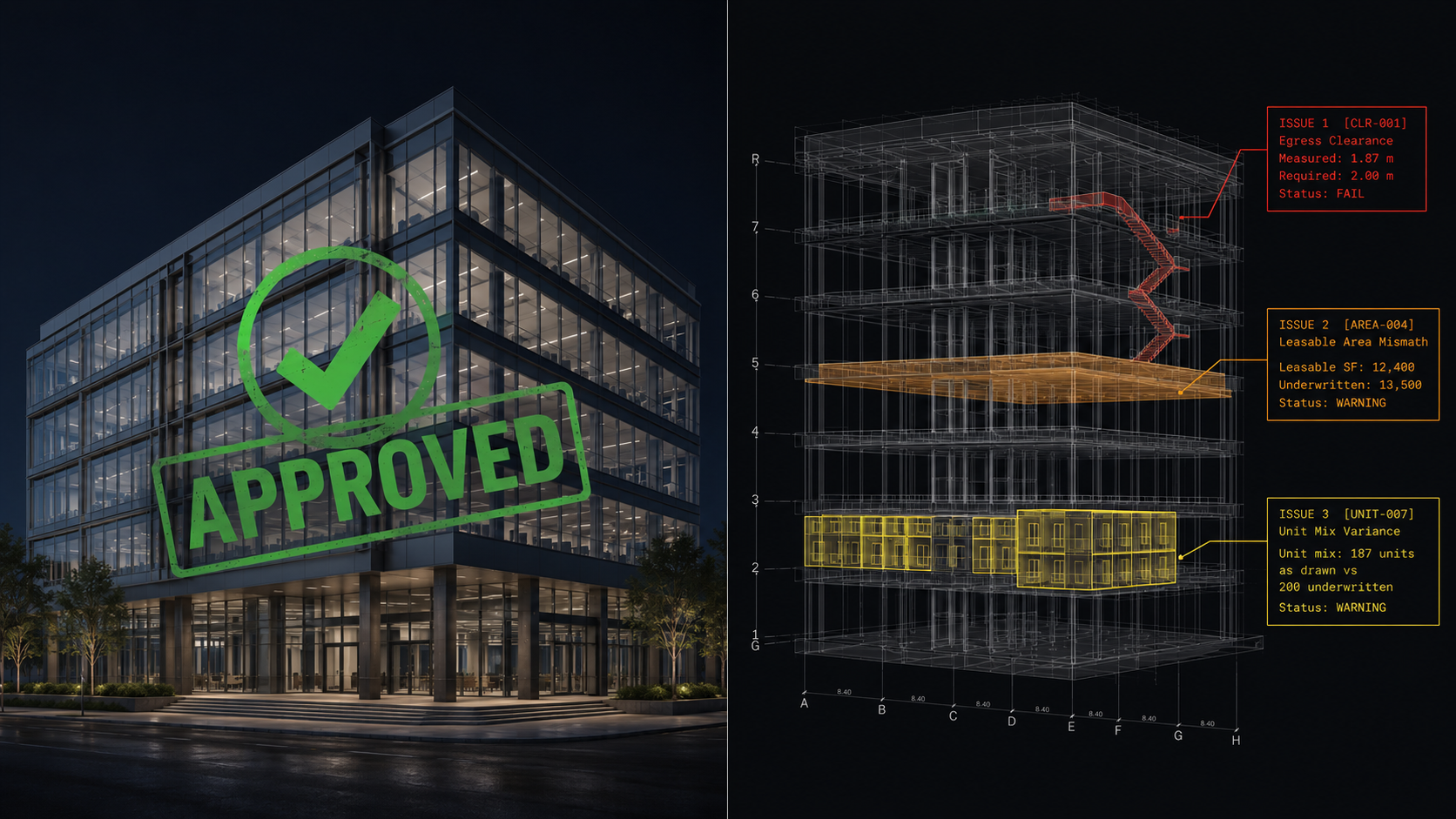

For all the discussion around AI, robotics, and digital transformation in architecture and construction, this remains the foundational problem: the industry runs on disconnected representations of the same building. Drawings. BIM models. Specifications. Schedules. Code reviews. Product submittals. Energy analyses. Consultant packages. Permitting documents. Each one is an attempt to describe the same physical reality from a different vantage point. And each one is capable of drifting the moment any other one changes.

According to the Construction Industry Institute, deviations and rework account for more than 12% of total installed project cost on large construction projects.[1] A separate FMI and PlanGrid study found that construction professionals spend roughly 35% of their time on non-optimal activities: searching for project information, resolving conflicts, dealing with mistakes caused by information that didn't agree.[2] These numbers are not aberrations. They are what it costs to run an industry with too many sources of truth.

Once you see construction this way, the scaffolding becomes visible everywhere. RFIs don't exist because contractors ask too many questions. They exist because the documents contain information that is incomplete, inconsistent, or conflicting, and someone has to formally resolve it. Coordination meetings don't exist because project teams lack communication skills. They exist because separate firms maintain separate interpretations of the same building, and someone has to manually align them at regular intervals. Submittals exist because product selections, specifications, engineering assumptions, and code requirements all live in different systems with no structural relationship to one another, and every one of them has to be verified by hand before anyone trusts them.

The industry has not built these workflows because they are efficient. It has built them because they are necessary. They are the operating infrastructure of a system that cannot keep itself in sync any other way.

BIM was supposed to change this. To its credit, it changed a great deal: better visualization, better coordination, fewer conflicts discovered in the field. But BIM produced a more sophisticated document, not a single source of truth. The model lives in one authoring environment and gets exported to PDFs for consultants on different platforms. Specifications are maintained in separate systems with no live connection to geometry. Field conditions get reconciled against the model by project managers carrying the gap in their memory. Once construction begins, the model and the building diverge, and that divergence is where the more consequential and less-discussed problem begins.

The information failures most commonly discussed in AEC happen during design and pre-construction: coordination conflicts, code issues, specification mismatches. These are serious and expensive, and they are also the part of the lifecycle that has received the most analytical attention, the most software investment, and the most process improvement effort. The information failure that has received almost none of that attention happens after construction begins and continues for the entire operating life of the building.

Field conditions diverge from design intent the moment work starts. Contractor modifications, value engineering substitutions, coordination-driven adjustments, and unforeseen site conditions all alter the building from what was drawn. Most of these changes are minor in isolation. Few of them are systematically captured in any persistent, structured record. By project closeout, the as-built condition of the building (what was actually constructed) is documented, if at all, through a set of redline markups on paper drawings, PDF annotations made by field supervisors, and the institutional memory of people who will eventually leave or retire.

What follows is decades of operating a physical asset against information that doesn't match it. Facilities managers run preventive maintenance against equipment locations that may have shifted during construction. Capital planning decisions rest on square footage calculations derived from design documents that don't account for as-built modifications. When a tenant requests a renovation, the contractor doing the work often begins by spending significant time and money on field verification (measuring spaces, locating systems, identifying conditions) because nobody trusts the drawings on file. When the building is eventually sold, underwriters and lenders make assumptions about the physical asset based on records that may bear only passing resemblance to what was built.

This is not an edge case or a failure of particularly disorganized projects. It is the standard operating condition of most of the built environment. Buildings are routinely operated, maintained, traded, and redeveloped on the basis of information that drifted from physical reality the day construction began and has been accumulating inaccuracy ever since.

The cost of this is diffuse and hard to measure precisely, which is part of why it receives less attention than pre-construction coordination failures. But it compounds over time in a way that pre-construction failures do not. A coordination conflict caught in design costs relatively little to fix. The same conflict discovered in the field costs many times more. A building operated against incorrect as-built data for thirty years produces decisions that are persistently miscalibrated: maintenance schedules that don't match actual system ages, capital reserves that don't match actual replacement costs, valuations that don't match actual conditions.

There is a temptation to frame this as a data-capture problem, one that could be solved by better field documentation practices, more diligent as-built markups, or newer scanning technologies. Those help at the margins. They do not address the underlying architecture.

The reason building information degrades across the lifecycle is not that people are careless about updating their records. It is that documents are structurally incapable of staying synchronized with a physical asset that changes continuously. A document is a snapshot. Once it is created, it begins to diverge from the thing it describes. Keeping a document current requires human effort applied continuously: someone actively deciding to update the record every time the physical reality changes. That is reconciliation. And the industry's entire portfolio of workflows for managing building information is, at its core, an infrastructure for doing that reconciliation at scale.
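
The snapshot problem can be made concrete with a small sketch. It is illustrative only: the `Snapshot` class, the `find_drift` helper, and the wall attributes are hypothetical names and values chosen for the example, not part of any real BIM or specification tool. The sketch replays the opening scenario, where the drawing set is reissued after a revision and the specification manual is not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """A document: a copy of some attributes as they stood when it was issued."""
    name: str
    values: dict


def find_drift(current: dict, snapshot: Snapshot) -> dict:
    """Return {attribute: (snapshot_value, current_value)} for every mismatch."""
    return {
        key: (old, current.get(key))
        for key, old in snapshot.values.items()
        if current.get(key) != old
    }


# Hypothetical wall assembly as originally designed.
wall = {"fire_rating_hr": 1, "stud_depth_in": 3.625}
spec = Snapshot("specification manual", dict(wall))
drawing = Snapshot("drawing set", dict(wall))

# The assembly is revised; only the drawing set is reissued.
wall["fire_rating_hr"] = 2
drawing = Snapshot("drawing set", dict(wall))

drift_spec = find_drift(wall, spec)        # the spec manual still says 1 hour
drift_drawing = find_drift(wall, drawing)  # the reissued drawing matches
```

Nothing in the specification snapshot signals that it is stale. Someone has to run the comparison, which is the reconciliation work described above.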

The phrase "single source of truth" has begun appearing in BIM and digital twin literature as an explicit industry objective.[3] It names the destination accurately. It also names something the document-centric model of building information cannot deliver, not because the software hasn't been good enough, but because documents are the wrong unit of truth. A building is a physical object that changes continuously from the moment design begins until the moment it is demolished. Any representation system that produces static artifacts and then asks humans to keep them aligned with a changing reality will always be fighting the same losing battle.

What would actually close this gap is a representation of the building that is itself structured, computable, and capable of maintaining relationships between geometry, assemblies, systems, compliance constraints, and operational conditions as those things change. Not a document that describes the building at a point in time. A model of the building that updates when the building changes and that can be interrogated across its full lifecycle.
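
As a minimal sketch of what "structured and computable" could mean, assume a single shared record per assembly, with the drawing tag, the specification clause, and the compliance check all derived from it. Every name here (`WallAssembly`, `BuildingModel`, `min_rating_hr`, the sample layers and ratings) is an assumption made for illustration, not a description of any existing product or standard.

```python
from dataclasses import dataclass, field


@dataclass
class WallAssembly:
    assembly_id: str
    fire_rating_hr: float
    layers: list


@dataclass
class BuildingModel:
    assemblies: dict = field(default_factory=dict)
    # Hypothetical compliance constraint: minimum fire rating per assembly id.
    min_rating_hr: dict = field(default_factory=dict)

    def drawing_tag(self, assembly_id: str) -> str:
        """Drawing view, derived from the shared record."""
        a = self.assemblies[assembly_id]
        return f"{a.assembly_id} / {a.fire_rating_hr}-HR"

    def spec_clause(self, assembly_id: str) -> str:
        """Specification view, derived from the same record."""
        a = self.assemblies[assembly_id]
        return (f"Assembly {a.assembly_id}: {a.fire_rating_hr}-hour rated; "
                + ", ".join(a.layers))

    def compliance_findings(self) -> list:
        """Re-evaluate every constraint against the current state of the model."""
        findings = []
        for aid, required in self.min_rating_hr.items():
            actual = self.assemblies[aid].fire_rating_hr
            if actual < required:
                findings.append(f"{aid}: rated {actual} hr, requires {required} hr")
        return findings


model = BuildingModel()
model.assemblies["W1"] = WallAssembly(
    "W1", 1, ["5/8 in. gypsum board", "steel stud", "5/8 in. gypsum board"]
)
model.min_rating_hr["W1"] = 2

findings_before = model.compliance_findings()  # one finding: W1 is under-rated
model.assemblies["W1"].fire_rating_hr = 2      # a single change to the record
findings_after = model.compliance_findings()   # no findings remain
```

Because both views read from one record, the drawing tag and the specification clause cannot disagree, and the compliance check always runs against the current state. A real system would also need geometry, versioning, and as-built capture, none of which this sketch attempts.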

This is what digital twin research has been pointing toward for years.[3] It is also why the gap between that vision and what most buildings actually have (a PDF set that reflects design intent as of permit submission, plus a pile of change orders) is one of the most consequential unresolved problems in the built environment.

Closing it requires treating building information not as a documentation problem but as an infrastructure problem. The building needs a data layer that outlives any individual project team, any individual platform, and any individual decision — one that captures what was built, not just what was intended, and is structured to support the decisions that will be made about that building for the next fifty years, not just the ones being made today. That is a longer horizon than most construction software is designed for, and it is also where the actual value is. That shift is central to what we are building at Buildable Engine. But regardless of which companies lead the category, the direction is the same. The built environment has always needed a living record of itself. The industry is finally beginning to build one.

[1] Construction Industry Institute (CII), "Costs of Quality Deviations in Design and Construction." https://www.construction-institute.org/resources/knowledgebase/knowledge-areas/quality-management/topics/rt-153

[2] FMI Corporation and PlanGrid, "Construction Disconnected." https://www.autodesk.com/construction/construction-disconnected

[3] Deloitte, "2026 Engineering & Construction Industry Outlook." Discusses digital twins and AI agents as emerging standard expectations in construction technology ecosystems.