There is a familiar pattern in every industry when a new technology shows up.

First, it is dismissed. Then it is feared. Eventually, it becomes infrastructure.

Construction and architecture are somewhere between the second and third phases. And the hesitation is understandable, not because the technology isn't real, but because the stakes of getting it wrong are. This is an industry where errors don't stay on the screen. They move into the ground, into the framing, into the walls, and by the time they surface, the cost of fixing them has multiplied many times over. A profession shaped by that reality is going to approach new tools carefully. That's not resistance. That's judgment.

What makes this moment different from previous technology shifts is the nature of what AI is being asked to do. Earlier tools (CAD, BIM, project management software) extended what professionals could already do manually. They made existing work faster and more precise. AI is being positioned as something more fundamental: a system that can reason, evaluate, and in some cases decide. That is a different kind of claim, and it warrants a different kind of scrutiny. In a recent post, I wrote that [AI is inherently probabilistic, while buildings are not](https://www.buildableengine.com/blog-posts/ai-is-probabilistic-buildings-are-not), and that tension is not theoretical. It has direct implications for how work in this industry can actually change.

The industry knows this. The professionals who work in it know this. And the conversation that is actually happening — in firms, on job sites, in building departments — is not the one being had in the broader technology press.

The public version of this conversation is about replacement. Will AI take the jobs of architects, engineers, designers? That framing generates attention, but it misses what professionals are actually concerned about. The real issue is control — specifically, professional control over outputs that carry legal, financial, and safety consequences.

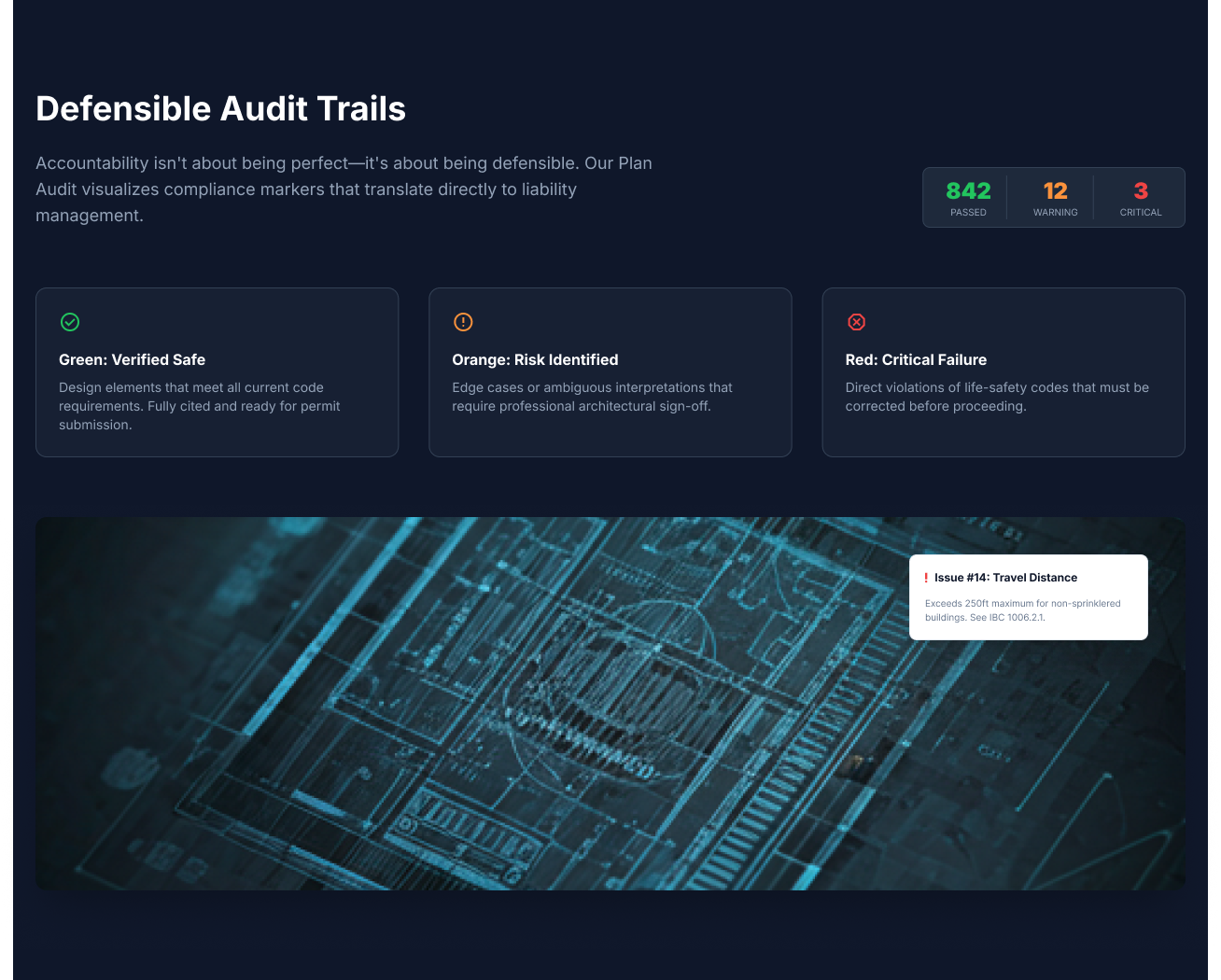

No architect, engineer, or contractor is worried about whether a tool can generate something. Generation is the easy part. Buildings do not just need to be generated; they need to be correct, and correctness is not probabilistic. What these professionals are concerned about is whether they can stand behind the output, explain it, and take responsibility for it in front of a client, a building department, or a court if something goes wrong. The license is theirs. The liability is theirs. The signature on the drawing is theirs. A tool that produces output they cannot fully trace or defend does not reduce their exposure; it increases it.

This is not a philosophical concern. It is a professional one, grounded in how accountability actually works in this industry. And it is the reason most AI systems feel misaligned with how work happens in the built world: not because professionals are resistant to technology, but because the technology hasn't yet met the standard the work demands. Much of what is labeled "AI for design" focuses on generation: producing options, variations, and suggestions. In research with more than 50 architects and builders, we heard that generative tools were still useful to them for early-stage exploration, massing studies, and schematic iteration, but that generative output ultimately shifts a burden rather than removing it.

The result still needs to be interpreted, validated, and owned by a human professional. AI can generate plausible designs, but it cannot guarantee that those designs meet code, resolve across systems, or can be built without downstream conflict. For simple exploratory designs the risk is manageable. For larger, more complex work, relying on generative tools increases risk significantly: decisions become harder to trace because an opaque intermediary now sits between the professional and the output they are accountable for. That is a worse position than where you started, and it is why the replacement conversation misses the point entirely. Replacement was never the real risk. The risk is a tool that adds ambiguity to a profession where ambiguity is already expensive.

The more useful question is not what AI can generate, but what it can reliably remove, particularly given that much of the work in this industry exists because systems cannot validate decisions early. Because buildings must be deterministic, and AI systems are not, the burden of ensuring correctness does not go away — it shifts, and becomes more central to how architectural work is structured. Professionals in this industry carry two distinct kinds of work, and it is worth being precise about the difference.

There is the work that requires judgment — decisions about what to build, how it should function, how it should relate to its site and context, and how it will be experienced by the people who use it. This is the work that draws people into the profession, where expertise compounds over a career and the difference between a good professional and a great one becomes visible.

And then there is the work that is mechanical but unavoidable: checking dimensions, validating clearances, confirming that a corridor meets egress requirements for its occupancy type, verifying that a door swing does not violate accessibility standards, and cross-referencing plans against the applicable code for the jurisdiction, the building type, and the year of adoption. These tasks are not trivial — catching a mistake at this stage is far cheaper than catching it in the field — but they are also not where the highest-value thinking lives. They are prerequisites, the table stakes that have to be met before the real decisions can be made.

The problem is that those prerequisites consume a disproportionate amount of professional time and attention, not because they are intellectually demanding, but because they are numerous, consequential, and easy to get wrong when done manually under deadline pressure. A single missed clearance can trigger a permit rejection, and a coordination error between disciplines can cascade into weeks of redesign. The cost is not just the correction itself — it is the delay, the rework, and the credibility lost with a client who expected a cleaner process.
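To make the idea of a mechanical check concrete, here is a minimal sketch of a corridor-width check in Python. Everything in it is an assumption made for illustration: the `Corridor` type, the `check_corridors` function, the "Example City" jurisdiction, and above all the minimum widths, which are placeholder numbers and not values taken from any adopted code. It is not a description of how any particular product works; it only shows that this kind of check is a lookup and a comparison, with the same inputs always producing the same result.

```python
from dataclasses import dataclass

# Hypothetical rule table: minimum clear corridor width in inches, keyed by
# (jurisdiction, code edition, occupancy type). The numbers are placeholders
# for illustration only, not values from any adopted building code.
MIN_CORRIDOR_WIDTH_IN = {
    ("Example City", "2021", "business"): 44.0,
    ("Example City", "2021", "residential"): 36.0,
}


@dataclass(frozen=True)
class Corridor:
    name: str
    clear_width_in: float
    occupancy: str


def check_corridors(corridors, jurisdiction, edition):
    """Return one message per corridor that fails or cannot be checked."""
    violations = []
    for c in corridors:
        key = (jurisdiction, edition, c.occupancy)
        if key not in MIN_CORRIDOR_WIDTH_IN:
            # No rule on file for this combination: report it rather than guess.
            violations.append(f"{c.name}: no rule on file for {key}")
            continue
        required = MIN_CORRIDOR_WIDTH_IN[key]
        if c.clear_width_in < required:
            violations.append(
                f"{c.name}: {c.clear_width_in} in < required {required} in"
            )
    return violations


plan = [
    Corridor("Corridor A", 48.0, "business"),
    Corridor("Corridor B", 40.0, "business"),
]
for message in check_corridors(plan, "Example City", "2021"):
    print(message)
```

Note the branch for a missing rule: when the table has no entry for a jurisdiction, edition, and occupancy, the sketch reports that gap instead of falling back to a default, since a silent guess is exactly the kind of ambiguity a professional cannot sign off on.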

When that layer of checking and validation moves into the background — not as approximation, but as a structured, reliable process that runs continuously, cites its reasoning, and ties every flag to a specific code and jurisdiction — something important shifts. The role of the professional does not shrink; it sharpens and, at the same time, expands in a different direction. As more of the repetitive validation and coordination work is handled by systems, more time becomes available for the work that is harder to formalize — concept, narrative, spatial experience, and design intent. Less time is spent verifying whether something is compliant, and more time is spent deciding what should be built, how it should work, and why those decisions are the right ones for this project and this client.

That is not a reduction of responsibility. It is a concentration of it. The judgment does not disappear — it moves upstream, to where it was always most valuable, and where creativity has the most impact. But that shift only happens if the underlying systems earn it, operating not as suggestions but as transparent, traceable systems grounded in the same logic that professionals are held accountable to — specific codes, jurisdictions, and versions, with every flag tied to a source that can be referenced, explained, and, if necessary, challenged. Anything short of that does not remove friction; it introduces a new kind, placing the professional in the position of having to audit the auditor.
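One way to picture "every flag tied to a source" is as a data structure in which a flag cannot exist without its citation. The Python sketch below is a hedged illustration under that assumption. The `Citation` and `Flag` types, the `make_flag` and `explain` helpers, and the "Example Building Code" with section "X.Y.Z" are all hypothetical names invented for this example, not part of any real code or product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Citation:
    code: str          # name of the adopted code (hypothetical here)
    section: str       # section within that code
    jurisdiction: str  # where the code is adopted
    edition: str       # year or version of adoption


@dataclass(frozen=True)
class Flag:
    element: str
    finding: str
    citation: Citation


def make_flag(element: str, finding: str, citation: Optional[Citation]) -> Flag:
    """Create a flag only if its citation is complete."""
    if citation is None or not all(
        [citation.code, citation.section, citation.jurisdiction, citation.edition]
    ):
        raise ValueError("refusing to emit a flag without a complete citation")
    return Flag(element, finding, citation)


def explain(flag: Flag) -> str:
    """Render a flag with the source a reviewer could look up or challenge."""
    c = flag.citation
    return (
        f"{flag.element}: {flag.finding} "
        f"[{c.code} {c.edition}, section {c.section}, as adopted by {c.jurisdiction}]"
    )


source = Citation("Example Building Code", "X.Y.Z", "Example City", "2021")
flag = make_flag("Door 12", "swing reduces required clearance", source)
print(explain(flag))
```

The design choice worth noticing is that the citation is a required part of the flag rather than an optional note attached afterward. A reviewer who disagrees with a flag then has something specific to check it against, which is what keeps them from having to audit the auditor.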

The future of this industry is not human versus machine, but a division of labor. Drawn correctly, systems handle what can be formalized, measured, and validated with consistency (explicit rules, fixed dimensions, and written codes) so that humans can focus on what cannot: judgment, trade-offs, and intent, the decisions that cannot be reduced to a rule and the solutions that emerge from experience and a deep understanding of a specific client, site, and set of constraints.

When that division holds, the effect is not replacement, but acceleration with confidence. Professionals move faster, make decisions with greater clarity, and encounter fewer errors that surface late and carry real cost. The work becomes less about catching mistakes and more about making decisions, less about defending outputs and more about producing them. As the cognitive load of verification is lifted, what remains is the work that drew people into the profession in the first place. If that division of labor is drawn correctly, the structure of the work itself shifts.

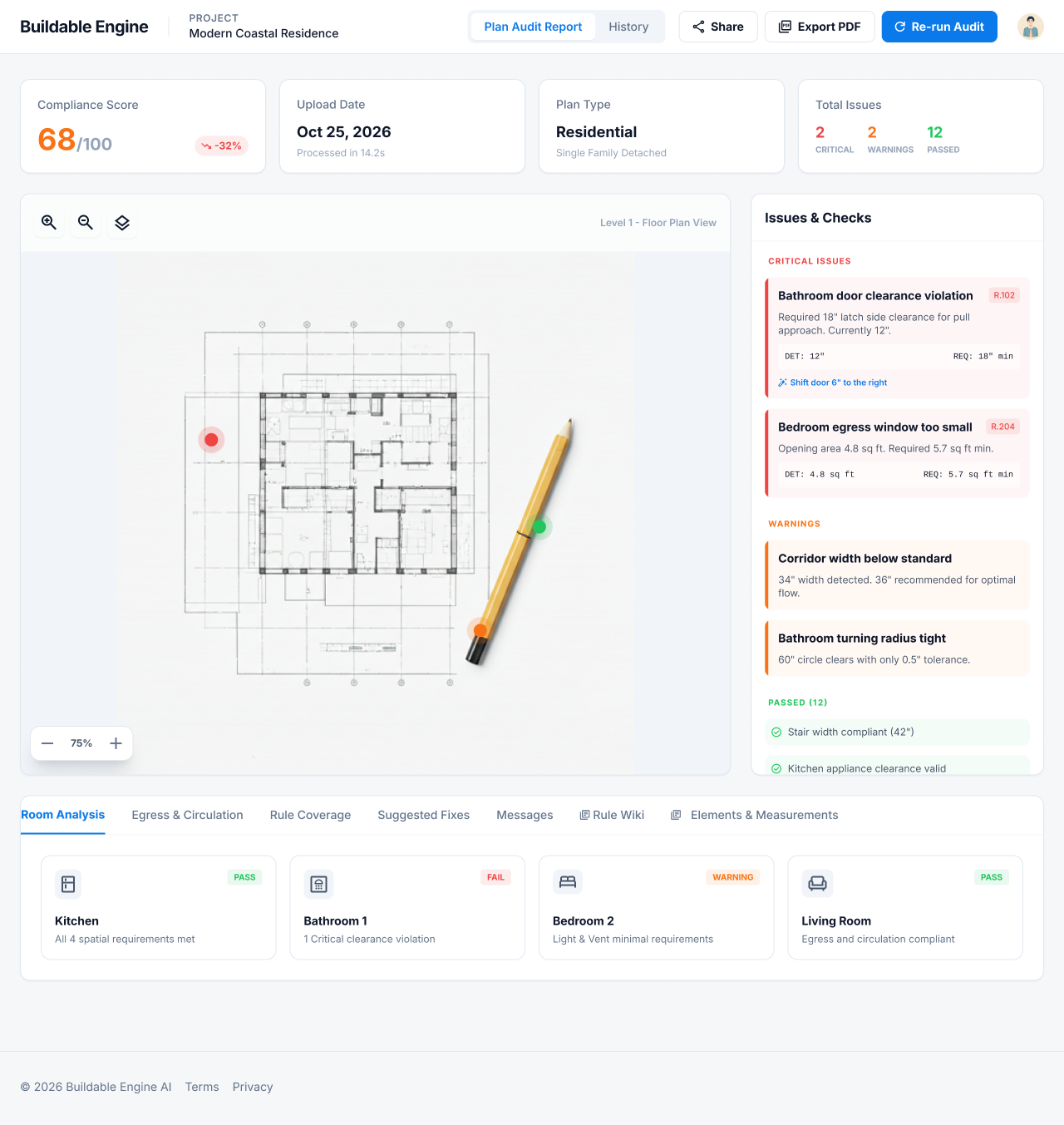

This is what Buildable Engine is built to support — not generating designs or approximating compliance, but owning the verification layer with the precision and traceability the profession requires, so that every flag cites a source, every check is traceable, and every output can be placed in front of a client or a building official without hesitation. The goal is not to replace professional judgment, but to protect the space where that judgment — and creativity — can operate at its best.

The question was never whether AI can design a building. Plenty of systems can already produce something that looks like one.

The question is whether it can remove the friction that makes designing and building one harder than it needs to be — and do so in a way that professionals can actually stand behind.

Russell Goldman is the founder of Buildable Engine.
