A plan comes back from the building department. The corridor is 32 inches. Code requires 36. Nobody caught it. Now the framing is done.

Fixing that mistake doesn’t mean adding four inches. It means opening walls, reframing, rerouting whatever runs through them, and absorbing delays that ripple through the rest of the project. What began as a drawing error now costs real money—often tens of thousands of dollars—because it wasn’t caught when it was still cheap to fix.

That is not a hypothetical. It is a description of how rework happens: not from carelessness, but from the compounding of small errors that nobody had a reliable way to catch before they became expensive. The further a mistake travels through a project, the more it costs to fix. By the time it surfaces in the field, it has become a change order.

This is the environment in which AI is being asked to prove itself in construction.

The challenge is not interest. Among architects, designers, contractors, and builders, the demand is already there—and it is specific. I know this not only from firsthand experience as a professional in the field, but also from countless interviews and conversations with colleagues working in construction. Put simply, people want to catch problems earlier. They want to reduce rework. They want to move toward permitting with confidence. When shown tools that promise to help, the reaction is consistent: they see the value immediately.

But adoption stops in the same place every time.

“I can't blindly follow this—I need to know exactly what code it's referencing.”

“We need confidence before submitting for permit.”

“Needs to be exact.”

These are not objections to AI. They are a precise description of what AI in this industry has to be in order to be usable. Flagging something as potentially wrong is helpful, but it does not resolve the problem—it shifts responsibility back to the human to interpret, validate, and decide. In a domain defined by liability, that is not enough. Most of what exists today cannot meet that bar—not because the technology is insufficient, but because it was built on the wrong foundation.

Most AI systems are probabilistic. This means they are trained to approximate—to find the most likely answer given available patterns. In many contexts, that is exactly what you want. A sentence can be slightly off without consequence. A color recommendation can be refined. In these situations, the cost of being wrong is recoverable.

In practice, this shows up in a very specific way. Most systems today identify patterns that look like violations and surface them for review. They flag, suggest, and prioritize—but they still require a human to interpret whether something is actually wrong, and what to do about it. The output is a starting point for judgment, not a resolution. That distinction matters, because it means the system is not determining whether something fails—it is identifying where it might.

But a corridor that is 32 inches when code requires 36 does not “mostly comply.” A clearance that is missed does not resolve itself. A plan that is usually correct is still wrong, and in construction, being wrong is not an abstract outcome. It becomes redesign, coordination failures, and delays that compound with every phase the error survives. Across the industry, that adds up to more than a trillion dollars each year.

If a tool flags a violation, it has to say why—which code, which section, which jurisdiction, which version. If it suggests a fix, that fix has to be traceable to something that can be referenced in front of a client, a consultant, or a building official. The question is not just whether the output is correct. It is whether the output can be defended—against code, against jurisdiction, against the person who signs off on the work.

This is a structural requirement, not a feature request. And it is the reason most AI tools in this space feel close but not quite usable. They demonstrate value in controlled settings, but they struggle to integrate into real workflows where accountability, liability, and precision are not optional.

Any system that hopes to be used in this environment must meet that standard.

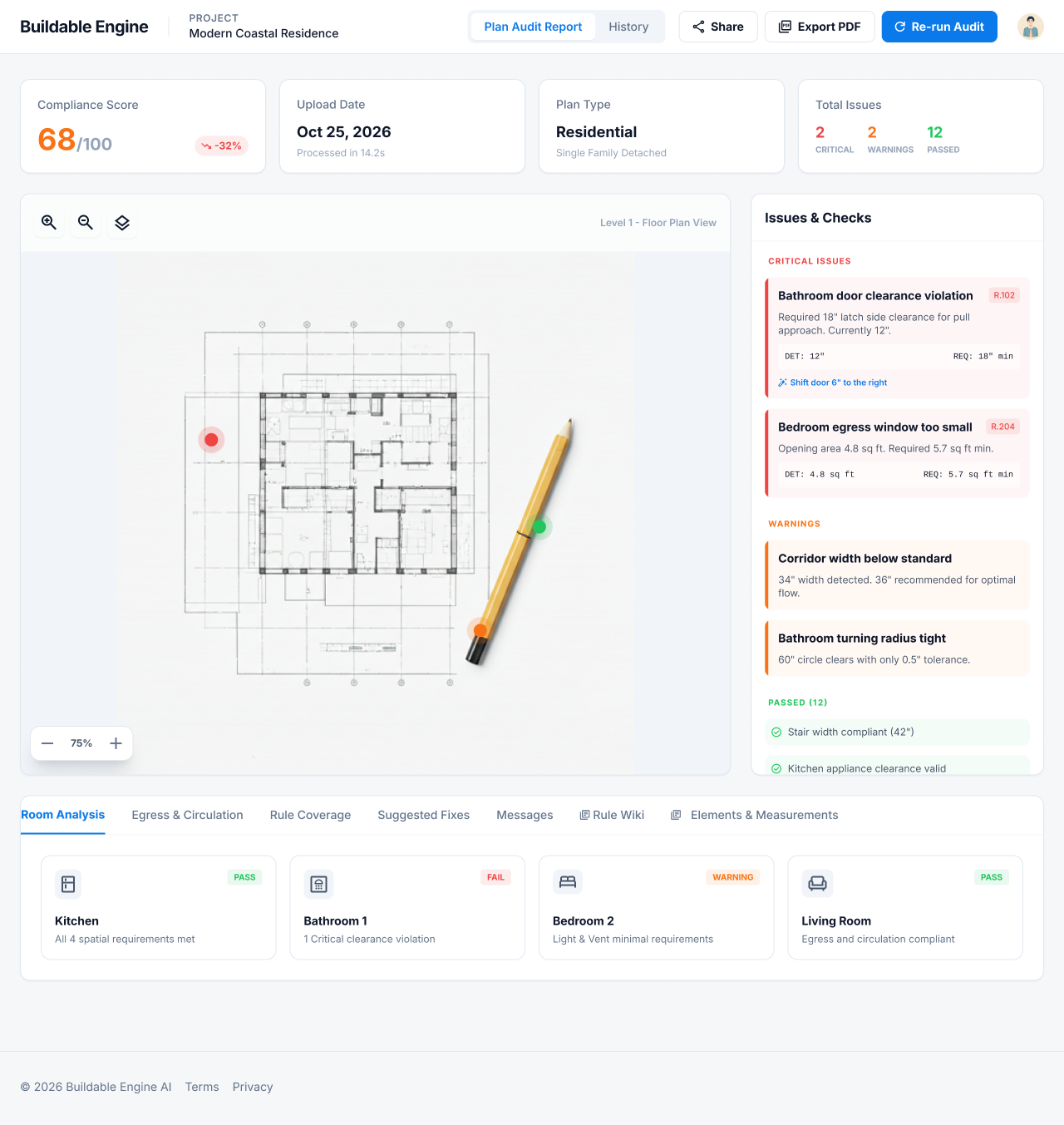

Buildable Engine was built around this idea.

Not as a system that generates and approximates, but as one that validates against explicit rules and shows how those decisions are made. It is designed not only to interpret plans as patterns, but to measure and determine exact sizes, shapes, and relationships between elements within them.

When it identifies an issue, it ties it to a specific code, jurisdiction, and requirement, and makes clear what exists, what is required, and where the gap is. Each check is traceable, and each result can be reviewed, questioned, and understood in context.
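
To make that shape concrete, here is a minimal sketch of a rule-based check applied to the 32-inch corridor from the opening. It is an illustration only, not Buildable Engine's actual implementation: the `Rule` and `Finding` types, the `check_min_width` function, and every citation value (code name, section, jurisdiction, version) are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """An explicit requirement plus the citation it comes from (placeholders)."""
    code: str
    section: str
    jurisdiction: str
    version: str
    description: str
    min_inches: float


@dataclass(frozen=True)
class Finding:
    """A failed check: what exists, what is required, and the gap between them."""
    element: str
    rule: Rule
    measured_inches: float

    @property
    def gap_inches(self) -> float:
        return self.rule.min_inches - self.measured_inches

    def explain(self) -> str:
        r = self.rule
        return (
            f"{self.element}: measured {self.measured_inches} in, "
            f"required {r.min_inches} in, short by {self.gap_inches} in "
            f"[{r.code} {r.section}, {r.jurisdiction}, {r.version}]"
        )


def check_min_width(element: str, measured_inches: float, rule: Rule) -> Optional[Finding]:
    """Return a Finding if the element is narrower than the rule allows, else None.

    The same inputs always produce the same result; nothing here is estimated.
    """
    if measured_inches >= rule.min_inches:
        return None
    return Finding(element=element, rule=rule, measured_inches=measured_inches)


# Placeholder citation: not a real code section or jurisdiction.
CORRIDOR_RULE = Rule(
    code="EXAMPLE-CODE",
    section="0.0.0",
    jurisdiction="Example City",
    version="2024",
    description="Minimum corridor width",
    min_inches=36.0,
)

finding = check_min_width("Corridor C1", 32.0, CORRIDOR_RULE)
if finding is not None:
    print(finding.explain())
```

The check either passes or returns a finding that carries its own citation, so a reviewer can see the measured value, the required value, and the source of the requirement in one place.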

That is what determinism looks like in practice. Not AI that approximates compliance, but AI that enforces it with the precision, traceability, and accountability that professional work in the built world actually requires.

The industry has been waiting for this. Not because the technology was unavailable, but because no one built it on the right foundation.

We did.

— Russell Goldman, founder of Buildable Engine

Buildable Engine v2.0 launches today, bringing Buildability Intelligence to the market as a working product. Here's a walkthrough of what Buildable Engine actually does: from uploading a floor plan to a compliance score, cited violations, AI-suggested fixes, and a design you can stand behind. All in seconds, before a permit is filed.

The construction industry has always had a name for what goes wrong between design and reality — RFIs, change orders, schedule float. Now it has a name for the layer that prevents it. Buildability Intelligence™ is a new category of applied AI that evaluates whether a designed object can actually be built, before a permit is filed or a foundation is poured.

The conversation about AI in architecture keeps asking the wrong question. It's not about replacement — it's about whether AI can meet the standard of accountability that professional work in the built world actually requires.